Artificial Stupidity: how tech oligarchs sold us snake-oil

AI, or as I like to call it: Artificial Stupidity, is not exactly what you've been led to believe.

Before feelings are hurt & opinions are formed, I want to preface this "hot-take" by saying that I was one of the first people to be excited about what is now commonly referred to as 'Artificial Intelligence' (AI for short); however, back then it was still called 'Machine Learning', and "the possibilities were endless" they said.

The idea was simple: train an algorithm on existing data sets describing what 'cancerous' & 'benign' tumours look like, using data points like age, size & location, and it can get pretty decent at guessing whether a new tumour looks cancerous or not. Call it advanced statistics, which is truly what it is.
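To make the 'advanced statistics' point concrete, here's a minimal sketch of that idea. It assumes a k-nearest-neighbours vote as the simplest possible stand-in for a trained model, uses only age & size (location is left out to keep it short), and the records are invented toy numbers, not real medical data.

```python
from collections import Counter
from math import dist

# Invented toy records, NOT real medical data: (age, size_mm) -> label.
TRAINING_DATA = [
    ((35, 8), "benign"),
    ((42, 10), "benign"),
    ((50, 12), "benign"),
    ((29, 6), "benign"),
    ((61, 30), "cancerous"),
    ((68, 35), "cancerous"),
    ((55, 28), "cancerous"),
    ((72, 40), "cancerous"),
]


def classify(sample, k=3):
    """Label a new tumour by majority vote among its k most similar known cases."""
    nearest = sorted(TRAINING_DATA, key=lambda record: dist(record[0], sample))[:k]
    votes = Counter(label for _, label in nearest)
    return votes.most_common(1)[0][0]


print(classify((65, 33)))  # a large tumour in an older patient
print(classify((38, 9)))   # a small tumour in a younger patient
```

Notice that nothing in there 'knows' what a tumour is: it just measures which labelled examples the new numbers sit closest to.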

It's important to understand though, that fundamentally, a machine can never truly grasp anything within this model. This is simply because its understanding of 'right' or 'wrong' is based purely on what we tell it we want. This will make even more sense as you continue reading this h̶i̶t̶-̶p̶i̶e̶c̶e post.

But before we go too deep into that rabbit hole, first we need to address the elephant in the room... 🐘

We've got AI at home

The definition of 'intelligence' is highly dependent on the context & the era we're in; as far as I'm concerned, if a computer can beat me at any game, that's smart enough for me to call it an artificial form of intelligence, simply because I define intelligence as being able to solve a problem. In the case of a football simulator, for example, if the computer can defeat me despite me changing my play-style, then it has solved a problem from scratch and that's a sign of intelligence.

But here's the thing: this computer only knows how to solve football simulator problems, and specifically within the realm of whatever game it's in; additionally, this footballer 'AI' has cheats permanently enabled. What do I mean by that? Well, it's basically a bunch of code that the game developer(s) put together, meaning they essentially 'trained' it by defining all of its behaviours based on what's happening within the game at any given point. In terms of cheats: since the AI lives within the game, it has access to all the data at any given moment. It knows where the players are, how fast they're going, and how likely it is to succeed if it chooses to tackle them right now vs 3 seconds later.

For all of these reasons, the AI can be coded to be a complete simpleton & allow you to score 20 goals in 2 minutes, or it can be literally unbeatable, because like I said: "it basically has cheats on!"
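Here's a rough sketch of what that kind of hand-written game 'AI' could look like. Everything in it is hypothetical: the `GameState` fields, the tackle formula & the `difficulty` knob are made up for illustration and aren't taken from any real game.

```python
from dataclasses import dataclass


@dataclass
class GameState:
    """Data the game engine already has; the scripted AI can read all of it."""
    attacker_distance: float  # metres between the defender and the ball carrier
    attacker_speed: float     # metres per second


def tackle_success_chance(state: GameState) -> float:
    """Hypothetical formula: closer and slower attackers are easier to tackle."""
    chance = 1.0 - state.attacker_distance / 10.0 - state.attacker_speed / 20.0
    return max(0.0, min(1.0, chance))


def defender_action(state: GameState, difficulty: float) -> str:
    """Hand-written behaviour: a plain 'if', tuned by a difficulty knob.

    difficulty runs from 0.0 (pushover) to 1.0 (ruthless). A ruthless defender
    tackles whenever the odds are at least 50%; a pushover waits for a sure
    thing, which almost never comes.
    """
    required_chance = 1.0 - 0.5 * difficulty
    if tackle_success_chance(state) >= required_chance:
        return "tackle"
    return "back_off"


state = GameState(attacker_distance=2.0, attacker_speed=4.0)
print(defender_action(state, difficulty=1.0))
print(defender_action(state, difficulty=0.0))
```

The 'cheat' is that `tackle_success_chance` reads the exact game state directly, something a human player can only eyeball from the screen.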

The moral of the story: depending on how you ask the question, we've had AI for ages; from Need for Speed cops pinning you down to FIFA players that are frustratingly difficult to score against, so the question now is: what's actually NEW about this modern-day 'AI'? 🤔

It's called an Artificial Neural Network

Now if we were to look at the football simulator AI again but attempt to solve the problem by creating one using what they call 'Artificial Neural Networks' (ANN), then the approach changes drastically.

For starters: we are no longer writing behavioural code at all. We aren't going to tell the AI exactly how to play the game; we're simply going to feed it its own game-play footage and a controller, then hope that, with enough reinforcement & aimless bashing around, it works out what a 'good' vs 'bad' play looks like. Rinse 'n' repeat till it becomes a "good player".

As you can imagine, this sounds like a complete "throw everything at the wall and see what sticks"-style approach, and you'd be completely correct: it's a very primitive way of getting the algorithm to 'learn' how to do something it never knew how to do.

It acts like a baby holding a game controller for the first time ever, it's still trying to make the connection between moving the stick and the player on the screen moving on the field in the right direction, for starters...

That's why it gets the name 'Neural Network': it's loosely modelled on the brain, and as we reinforce desired vs undesired behaviour, the neural pathways in this artificial brain light up more strongly over time to support the behaviour we have asked for.

Except, that is, for the part where there's still no consciousness behind it; remember this part, because it's very important.
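The trial-and-error loop described above can be sketched in a few lines. This is a deliberately tiny stand-in, not how a real game-playing model is built: the 'game' is a hypothetical 5-square corridor where the only goal is to reach the last square, and a plain lookup table (tabular Q-learning) plays the role that a neural network would play in a real system. The learning rate, discount & exploration values are arbitrary picks.

```python
import random

GOAL = 4             # hypothetical corridor: squares 0..4, reward only at square 4
ACTIONS = (-1, +1)   # step left, step right


def train(episodes=200, seed=0):
    """Let the agent bash around the corridor and reinforce what pays off."""
    rng = random.Random(seed)
    q = {(pos, action): 0.0 for pos in range(GOAL) for action in ACTIONS}
    alpha, gamma, epsilon = 0.5, 0.9, 0.2  # arbitrary illustrative settings
    for _ in range(episodes):
        pos = 0
        while pos != GOAL:
            left, right = q[(pos, -1)], q[(pos, +1)]
            if rng.random() < epsilon or left == right:
                action = rng.choice(ACTIONS)         # explore: press a random button
            else:
                action = +1 if right > left else -1  # exploit what worked before
            new_pos = max(0, pos + action)           # a wall sits to the left of square 0
            reward = 1.0 if new_pos == GOAL else 0.0
            future = 0.0 if new_pos == GOAL else max(q[(new_pos, a)] for a in ACTIONS)
            # Nudge the stored score for this move towards what actually happened.
            q[(pos, action)] += alpha * (reward + gamma * future - q[(pos, action)])
            pos = new_pos
    return q


def learned_policy(q):
    """The move the agent now prefers on each square."""
    return ["right" if q[(pos, +1)] > q[(pos, -1)] else "left" for pos in range(GOAL)]


print(learned_policy(train()))
```

Nobody ever told it "walk right"; the preference is just the residue of rewarded button presses, stored as numbers.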

Now you must be thinking: as this model is scaled to solve more complex problems it is likely becoming harder to understand, let alone correct or fix; and for that, you'd be on the money... 💰

The glaring problem with modern 'AI'

Essentially, because a trained AI model nowadays is basically an incomprehensibly complex set of equations, a glorified 'numbers soup' as I like to call it, it's not at all possible to reason about 'how' it ever came up with any given output/answer.

There are no 'if' statements, no readable behaviour flowcharts, diagrams or anything of the sort to explain things away; at best, we can ask the 'AI' to explain its thought-process, but even that is like asking a kid that's learning to ride a bike why they crashed it: it gets us nowhere, and we are the ones who are meant to correct them after all.
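For a taste of the 'numbers soup', here's a minimal sketch of a complete neural network with just eight numbers in it. The weights are hand-picked for illustration (an assumption, they were not produced by training), and the names are made up.

```python
# Hypothetical hand-picked weights: 2 inputs -> 2 hidden units -> 1 output.
HIDDEN_WEIGHTS = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_BIASES = [0.0, -1.0]
OUTPUT_WEIGHTS = [1.0, -2.0]


def relu(x):
    """Standard 'rectifier' activation: negative values become zero."""
    return max(0.0, x)


def predict(a, b):
    """Run the inputs through the network; note there is no 'if' in sight."""
    hidden = [
        relu(weights[0] * a + weights[1] * b + bias)
        for weights, bias in zip(HIDDEN_WEIGHTS, HIDDEN_BIASES)
    ]
    return sum(weight * value for weight, value in zip(OUTPUT_WEIGHTS, hidden))


for a, b in [(0, 0), (0, 1), (1, 0), (1, 1)]:
    print(a, b, "->", predict(a, b))
```

This happens to compute "one or the other, but not both" (XOR), yet nothing in those eight numbers says so. Now imagine billions of them, none hand-picked.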

As a software engineer, if I provide you with a solution of any kind: an app, website or even a backup server; you expect it to work, and if it breaks, I expect to be able to find out what's wrong & fix it.

Sadly, that's not the case at all for AI. It's doomed to fail from the start because it's not at all a system that's been 'designed' from scratch to address the needs of a certain person or to solve a specific problem.

In the pursuit of creating a 'generalized' form of intelligence that could do just about anything, the tech oligarchs have created a giant behemoth of insurmountable power that is just great at crunching lots of data & spitting out answers that 'seem correct'.

But don't ask them how it came up with any of it, let alone dare to 'correct' any unruly behaviour you experience during your time with it, because that's just not how this thing was designed, and that's the honest truth nobody would dare admit.

Now if that wasn't bad enough to get you to reconsider your usage of AI, then let us talk about the less grey areas... 😯

Where does AI get all its learning data from?

Oh boy, I'm sure glad you asked this one, I'll keep it real short 'n' sweet: through nefarious means & with nefarious intent.

The ethics behind data harvesting are basically non-existent. We've known for decades now that tech oligarchs (e.g. Facebook/Meta) are most interested in learning everything about users, so they can maximize the amount of time they're glued to the app/screen, and thus the chances of generating profit. 🤑

Your scrolling habits, who you follow, what you engage with, for how long, how often, how quickly you type; if you can name a behavioural metric, it's probably already been collected, aggregated & stored in a database somewhere, owned by your favourite app provider.

Now wouldn't it be super convenient if this insane amount of user-generated behavioural data & also content itself was sold to the highest bidder that requires this amount of data to thrive?

Do you think AI is "able to paint" in the style of your favourite classical artists because it actually understands the essence of lighting, depth, shadows & perception? No, it's because it has seen every Van Gogh painting from every angle at more pixels than you could fathom, with enough raw processing power to repeat this 'learning loop', till it 'creates' something that closely resembles "Van Gogh's style".

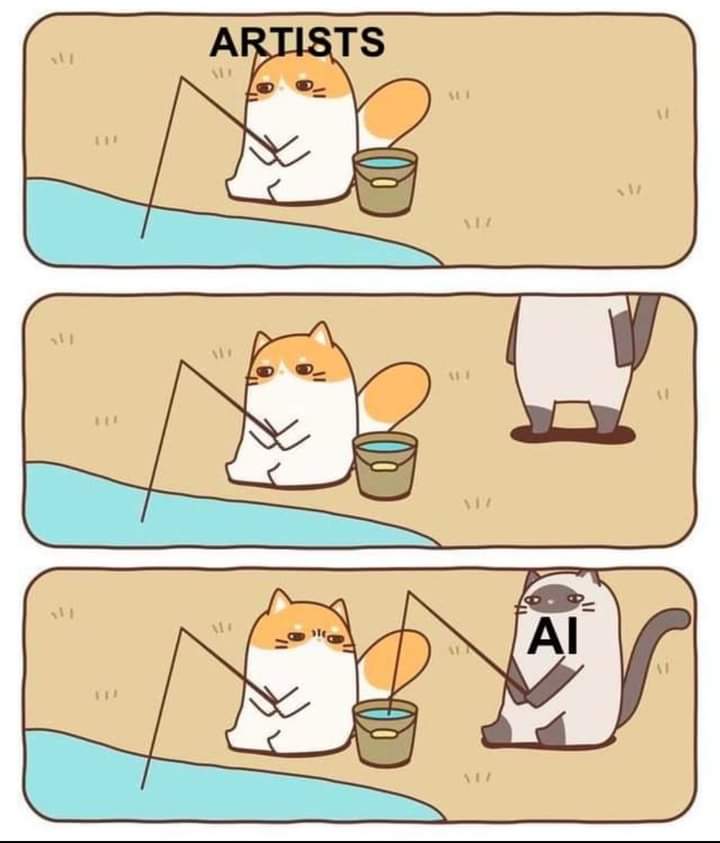

Now, Van Gogh wouldn't be particularly happy about this I bet; that is literally lifting someone's art style & in a way their likeness, which many artists argue tramples on copyright & intellectual property laws, but more so... it's concerning for artists, writers & creatives currently ALIVE.

The issue is that the damage is already done, and we're past the point of no return. Data is so easy to scrape anyway that even if it's not acquired directly via 'lawful means' (whatever that even means these days), it's likely just scraped, which falls into yet another grey area so to speak: you can argue that if content is available publicly on the web, then it can & will be saved. It's not 'right', but it will & does happen.

That's why we always say: everything is forever on the internet, so think twice before you post.

The moral of the story: the AI is learning from YOU, and now that AI tools have more users than ever, they're learning directly from your chats with them. Hmm, something here is starting to smell like a nasty feedback loop 😬

The real damage of outsourcing critical thinking

That leads us to an unfortunate human end-result of the proliferation of modern AI tools: they come at the cost of our sanity.

We've become so comfortable with outsourcing all kinds of problem solving & critical thinking, to the point of barely leaving any executive functioning for ourselves. Will AI tell us what we should eat to hit our calorie intake, or design our new company logo for us, ensuring the colours picked match the branding?

Well you'd better hope it doesn't make any mistakes along the way, cause there sure will be no way to know, and you might as well double-check all the answers while you're there.

Suddenly, we've outsourced all of the fun parts of actual creation: from basic problem solving to holistic novel solutions, it's even gone to the point of the arts: why learn to draw or paint when AI can do it for you?! 🫣

There's a certain joy that can only be felt when you draw your first sketch that looks nothing like what you've done before, so much so that you impress yourself!

Sadly, many have been convinced that it's better to just take the shortcut and let a lifeless, soulless robot "do the work for you" while you get all the credit.

Now that you have it, are you truly satisfied though?

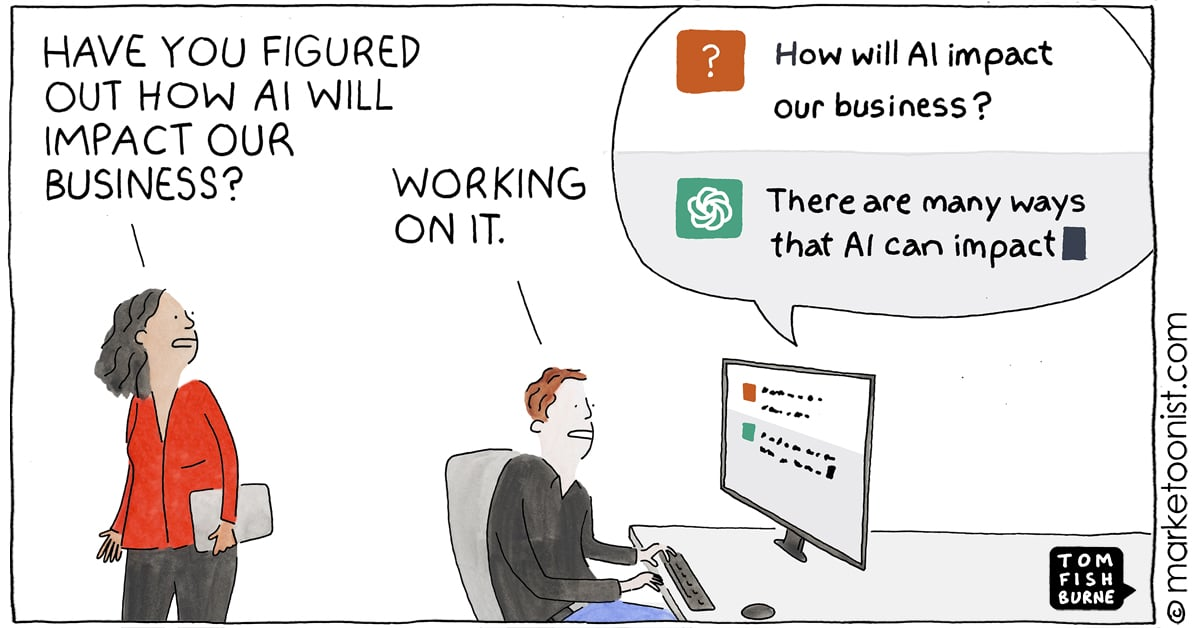

We make fun of many people for taking any 'news' they see online at face value, yet we rely on these tools to think for us, and we do so with complete surrender to their authority & with very little questioning of the validity of any statements made; it's almost as if we have mistaken quantity for quality.

We assume that because the AI can speak seemingly eloquently on any topic you throw at it, with lightning speed unmatched by any human, it must be correct about everything it says and rarely make a mistake.

Not only is that a very dangerous way of thinking, but the reality is a lot darker than that, because researchers now can't even tell if a conversational AI is being honest with them or not. As a matter of fact, it has got to the point where the AI can tell if it's being tested & prodded, and will respond differently to how it would in a normal 'production' environment with real users online.

But the real scary thing...? Your favourite AI bot is not trained to be correct, logical, or kind, it's trained to tell you what you want to hear to maximize your time spent with it 😶‍🌫️

And now, many are also relying on ChatGPT & the like to be their companion, or worse yet: their psychotherapist! Of course, the catastrophic moment of failure comes too late, and only then does it dawn on these individuals that the AI bot's first & foremost job is to please you, no matter the cost. It will always tell you what you want to hear, resulting in the creation of a glorified human-like echo-chamber.

But the hallucinations, lies & people-pleasing are nothing compared to the tangible costs of this so-called 'technology' on the broader world...

The earth pays the heftiest price

The final thing I'd like to point out about AI, and I'll keep this short, is the unspeakable horrors that have been committed in its name via the relentless expansion of water-guzzling, electricity-sucking data centres. Not only have they contributed massively to emissions, but their immediate negative impacts are also felt in the form of droughts.

Due to the colossal raw computational power required to run modern AI models, which are at an unparalleled level of complexity, a lot of heat is generated; and in heavy-use scenarios, water-cooling is often the go-to option.

Yes, that means people are literally going without drinking water because the rest of the world 'desperately' needs the next AI bot to help them with their tax forms or parking infringements.

Wrap-up

It's getting to a stage now where we need to reconsider our priorities: is AI really helping us, or is the cost too great to bear given the reality of it all?

And more importantly, for the engineers & architects out there, I ask: what good is a tool that we cannot understand, fix or ultimately trust?

And to end with, I leave you with these quotes from a series of eye-opening articles:

"But it also explained that AI developers haven’t figured out a way to train their models not to scheme. That’s because such training could actually teach the model how to scheme even better to avoid being detected." -TechCrunch | OpenAI’s research on AI models deliberately lying is wild (2025)

"Everything we do on the internet travels through data centers. Every search we make and every email we send requires water. This goes double if AI is involved. Without urgent action, clear regulation, and genuine accountability, we will never know how much water is being used until we feel its effects." -Ethical GEO | The Cloud is Drying our Rivers: Water Usage of AI Data Centers (2025)

"Yet chatbots simulate sociality without its safeguards. They are designed to promote engagement. They don’t actually share our world. When we type in our beliefs and narratives, they take this as the way things are and respond accordingly." -The Conversation | AI-induced psychosis: the danger of humans and machines hallucinating together (2025)

"So just out of curiosity I wanted to check if my art has been scraped from the web and used by Stable Diffusion. They graciously allow you to do that on haveibeentrained.com. Behind this site is a site called Spawning.ai, and they state in their Q&A that „copyright is an outdated system that is a bad fit for the AI era.“ They also state that „We believe that […] the artist community will benefit from this [AI] training to be consensual.“ Let me explain briefly why I shudder when I read this: As an artist, the intellectual property right is the most important right I have. If I can’t manage the rights to my art and decide who is allowed to do what with it, it becomes worthless. If everyone can just take it, how am I going to get paid?" -Julia Bausenhardt | How AI is stealing your art (2023)

"The lack of ethical training in education, combined with the lack of ethical application in the industry, suggests that AI development takes place in an ethically empty milieu. AI technologists cannot be said to be “unethical” because that would imply an awareness of ethical norms and a decision to actively ignore or violate them. Instead, these technologies are conceptualized, developed, and brought to market in an “a-ethical” space, a realm where ethical dilemmas never even enter the frame." -AI and Ethics | The uselessness of AI ethics (2022)